Situation can usually be alleviated though with some sane WHEN conditions in trigger declarations. Row level BEFORE triggers are much more “cheaper” then AFTER triggers when updating a lot of rows, as they fire immediately vs at the end of the statement in which case Postgres needs to temporarily store the row state information.When you have many triggers on a table the execution order happens alphabetically by trigger name! Additionally in case of BEFORE and INSTEAD OF triggers, the possibly-modified row returned by each trigger becomes the input to the next trigger.And in Postgres 10 they were also extended with the “transition tables” feature, allowing you to inspect all rows changed by the statement to possibly do some summary aggregations or validation. They’re actually the default even if you don’t specify the level. Also there are “statement level” triggers that are executed only once per SQL.* Did you know that in Postgres one can also write DDL triggers so that you can capture/reject/log structural changes for all kinds of database objects? Most prominent use case might be checking for full table re-writes during business hours. Pgbench default vs with 3 “insert logging” triggers on updated tables Single table update vs with 1 “insert logging” trigger Single table update (pgbench_accounts) vs with 1 audit trigger written in “C” Single table update (pgbench_accounts) vs with 1 audit trigger Pgbench default vs with audit triggers for all 3 updated tables For reference – full test script can be found here if you want to try it out yourself. Very tolerable penalties again – almost doubling the dataset here and only paying a fraction of the price! This again shows that actually the communication latency and transaction mechanics together with the costly but essential fsync during commit have more influence than a bit of extra data itself (given we don’t have tons of indexes on the data of course).

Here we basically double the amount of data written – all updated tables get a logging entry (including pgbench_accounts, which actually gets an insert already as part on normal transaction).

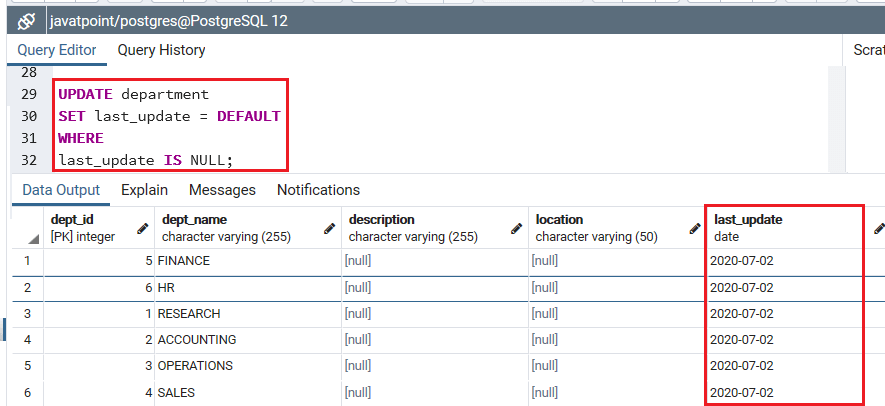

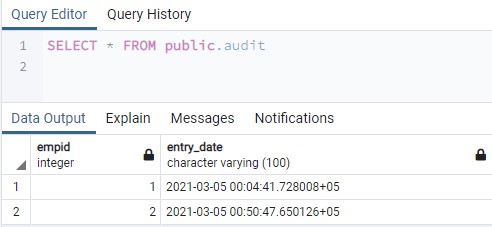

Not too bad at all given we double the amount of data! Default pgbench vs 3 “logging insert” triggers By the way, to test how pgbench works with prepared statements (used mostly to test max IO throughput) set the “protocol” parameter to “prepared“. Important to note though – although were seeing some slowdown…it’s most probably still faster that doing that insert from the user transaction as we can space couple of network bytes + the parsing (in our default pgbench case statements are always re-parsed from text vs pl/pgsql code that are parsed only once (think “prepared statements”). So basically I was doing from the trigger the same as the insert portion (into pgbench_history) from the default pgbench transaction. Here things get a bit incomparable actually as we’re adding some new data, which is not there in the “un-triggered” version. Single row update with a trigger doing “logging insert” Rettuple = heap_modify_tuple_by_cols(rettuple, tupdesc, Valbuf = (char*)SPI_getvalue(rettuple, tupdesc, attnum) The triggers will just set the last modification timestamp to current time and username to current user, if not already specified in the incoming row. This was the initial test that I ran for the original blog post – default pgbench transactions, with schema slightly modified to include 2 auditing columns for all tables being updated, doing 3 updates, 1 select, 1 insert (see here to see how the default transaction looks like) vs PL/PgSQL audit triggers on all 3 tables getting updates. Default pgbench vs audit triggers for all updated tables Please read on for some extra info on the performed tests or just jump to the concluding results table at end of article. So, to get more insights I conjured up some more complex trigger use cases and again measured transaction latencies on them for an extended period of time.

But in which use cases would it make sense to start worrying a bit? The conclusion for the most common “audit fields” type of use case was that we should not worry about it too much and just create those triggers. In my last post I described what to expect from simple PL/pgSQL triggers in performance degradation sense, when doing some inspection/changing on the incoming row data.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed